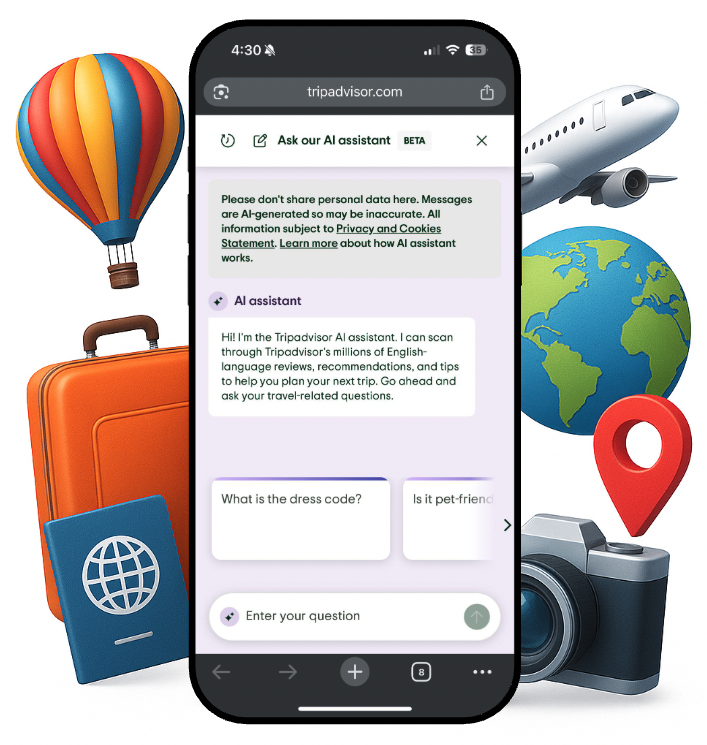

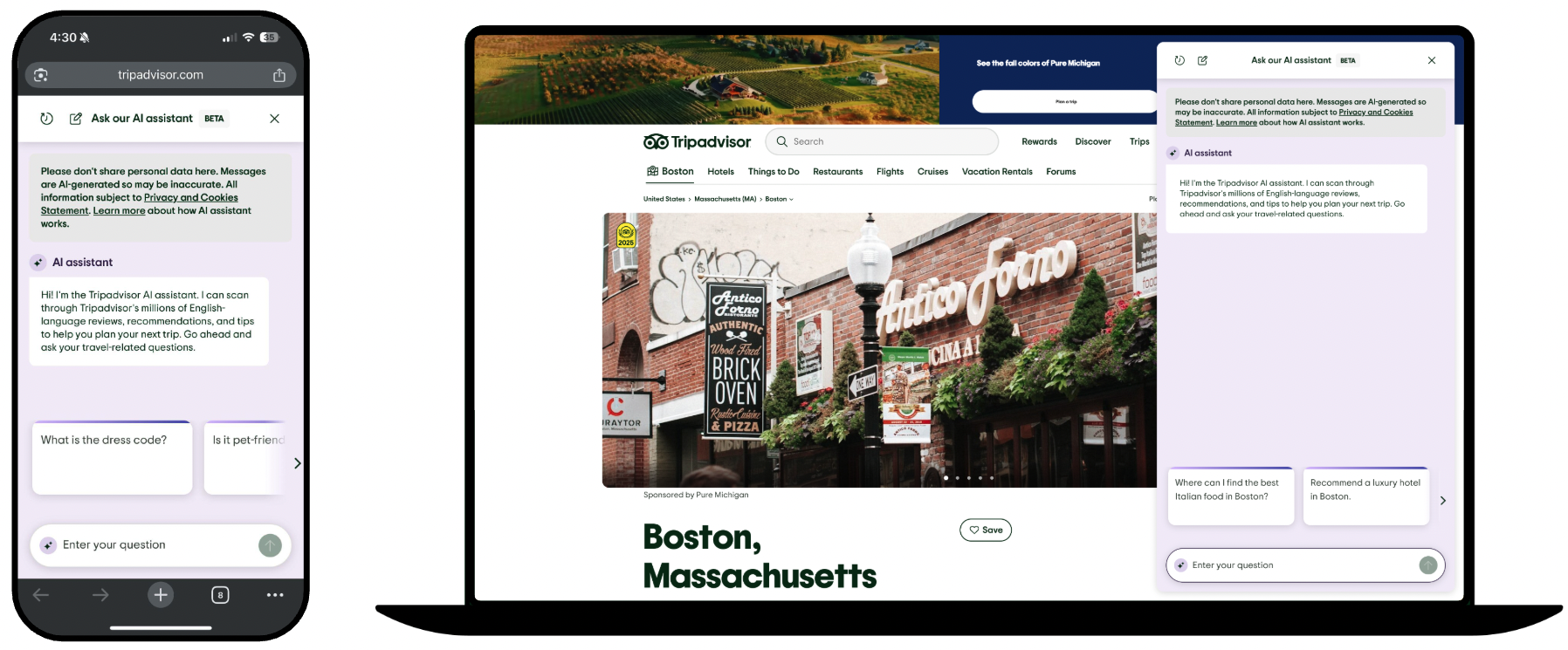

Reimagining Trip Planning with AI

Led research to evaluate and shape an AI-powered travel assistant designed to deliver personalized recommendations across the travel journey.

Impact

📈 +48% increase in return rate to the AI Assistant

⭐ 39% → 55% improvement in overall satisfaction

👍 69% → 82% increase in response upvotes

🔁 69% of users likely to reuse the assistant

🧭 Research insights informed TripAdvisor’s exploration of AI-driven travel planning

Role

Lead UX Researcher (AI Strategy)

Methodologies: Mixed methods (CSAT surveys, usability tests, and moderated concept-testing interviews)

Goal

Evaluate the AI Assistant’s usability, accessibility, and overall experience by measuring user satisfaction, ease of use, information reliability, and response quality (accuracy, relevance, clarity, and helpfulness) to inform iterative design improvements and product refinement.

Duration

9 months

Contributions: Design, Product and AI/Engineering

Problem & Opportunity

Travel planning is often fragmented across multiple tools, requiring travelers to search for inspiration, compare recommendations, and organize itineraries across different platforms. TripAdvisor saw an opportunity to simplify this process by exploring an AI-powered assistant that could provide personalized, context-aware recommendations throughout the travel journey. The vision for Ollie, the TripAdvisor AI Assistant, was to act as a conversational companion that could support travelers from early inspiration and trip planning to real-time exploration while traveling. To evaluate this opportunity and ensure the experience aligned with real traveler needs, our team conducted multiple rounds of research to understand user behaviors, expectations, and trust in AI-powered travel assistance.

Research Goals

To understand how travelers plan trips and explore the potential of an AI-powered assistant, our research focused on uncovering key behaviors, pain points, and opportunities to create value. Specifically, we asked:

👣 Traveler behaviors: How do users currently discover, plan, and organize trips? What tools and strategies do they rely on?

⚠️ Pain points: What frustrations or inefficiencies impact their planning experience the most?

🤖 Trust & adoption: How do users perceive AI recommendations, and what drives confidence in the assistant?

💡 Opportunity validation: Which features or interaction patterns would deliver the most value for users and inform TripAdvisor’s AI travel strategy?

Research Approach

Methodologies

CSAT Surveys: Measured overall satisfaction, ease of use, and perceived helpfulness to identify key pain points and track improvements.

Usability Tests: Observed users interacting with early prototypes to uncover friction and inform design iterations.

Moderated Concept Testing Interviews: Explored user reactions to AI assistant concepts, capturing feedback on trust, usefulness, and desired features.

Participants

🧳 All traveler segments

Key Insights

Insight #1 — Travelers want guidance but still want control ⚖️

Evidence: Users appreciated the idea of an AI assistant but hesitated to rely solely on automated recommendations. Many expressed the need to review, adjust, or override suggestions.

Implication: The AI assistant should act as a collaborative planning partner, giving users control over decisions while offering helpful guidance.

Insight #2 — Trust is essential for AI adoption 🤖

Evidence: Participants were cautious about recommendations from an AI system, especially when sources or reasoning weren’t clear. Lack of transparency lowered confidence in the assistant.

Implication: The design should include transparent reasoning and explainable recommendations to build user trust.

Insight #3 — Contextual, personalized suggestions drive engagement 📍

Evidence: Users responded positively to recommendations tailored to their location, past preferences, and travel style. Generic suggestions were often ignored.

Implication: AI interactions should leverage contextual signals and personalization to increase perceived value and engagement.

Insight #4 — Seamless, end-to-end travel support reduces friction 🛫

Evidence: Travelers found switching between multiple apps or tools frustrating. They valued features that could guide them through planning, booking, and on-the-go exploration in one place.

Implication: The assistant should support multiple stages of the travel journey, reducing friction across planning and in-trip experiences.

Product Implications

Based on our research, the AI Assistant should be designed to support travelers in a collaborative, trustworthy, and context-aware way. Key implications for the product include:

Transparent AI recommendations

Users need to understand why the assistant makes specific suggestions. Including source information, reasoning, or context builds trust and confidence in AI-driven guidance.

User-controlled refinement

Travelers want to adjust, filter, or override suggestions. Providing control over recommendations ensures users feel empowered and maintains engagement across the trip-planning journey.

Contextual, personalized conversations

AI interactions should leverage location, past behavior, and preferences to deliver relevant recommendations. A conversational interface makes exploration feel natural and supports multiple stages of the travel journey, from inspiration to on-the-go decisions.

End-to-end planning support

By integrating planning, booking, and in-trip exploration in one experience, the assistant can reduce friction and encourage users to engage repeatedly, driving both satisfaction and retention.

Feedback loops for continuous improvement

Capturing user feedback (e.g., upvotes on suggestions) enables the AI to learn and improve over time, increasing perceived helpfulness and user loyalty.

Results

As the AI Assistant grew across TripAdvisor’s platform, research became its compass. The usability tests and each round of the CSAT survey marked a new chapter in the product’s evolution, uncovering pain points, validating wins, and shaping smarter iterations. With every wave of feedback, the Assistant became sharper, faster, and more helpful. The impact of research wasn’t just seen in reports; it was reflected in the numbers, the performance, and the way users engaged. Some of the most notable outcomes included:

Overall satisfaction rose from 39% to 55%, with “helpful responses” cited more often as the top reason (27% → 49%)

Dissatisfaction dropped from 43% to 32%

Ease of use improved from 58% to 72%, while reported difficulty fell from 20% to 11%

69% of users are “very likely” or “likely” to use the AI Assistant again

The share of users upvoting responses also rose after launch, from 69% to 82%

+48% lift in return rate to the AI Assistant (8% vs. 5%)
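
The outcomes above mix two kinds of change: percentage-point differences (for example, satisfaction moving from 39% to 55%) and relative lift (the +48% return-rate figure). The short Python sketch below illustrates the distinction. The function names are illustrative and are not taken from the study's tooling. One caveat: applied to the rounded rates of 8% and 5%, the relative-lift formula gives 60%, so the reported +48% was presumably computed from unrounded return rates, which this write-up does not list.

```python
def percentage_point_change(before: float, after: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after - before


def relative_lift(before: float, after: float) -> float:
    """Relative change of `after` versus `before`, expressed in percent."""
    if before == 0:
        raise ValueError("relative lift is undefined when the baseline is 0")
    return (after - before) * 100 / before


# Before/after percentages as reported in the results list above.
reported = {
    "Overall satisfaction": (39, 55),
    "Ease of use": (58, 72),
    "Response upvotes": (69, 82),
    "Return rate (rounded)": (5, 8),
}

for metric, (before, after) in reported.items():
    points = percentage_point_change(before, after)
    lift = relative_lift(before, after)
    print(f"{metric}: {points:+.0f} pts, {lift:+.1f}% relative")
```

Reporting both numbers side by side helps readers avoid mistaking a percentage-point gain for a relative one, which matters most for small baselines such as the return rate.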

Reflection

This project reinforced the importance of trust and transparency when introducing AI into decision-making. Even when an AI assistant is helpful, users need clear reasoning and control to feel confident in its recommendations.

I also learned how contextual and personalized interactions significantly impact engagement, and that designing for multiple stages of the travel journey requires balancing guidance with user autonomy.

🔍 For future iterations, I would explore:

Dynamic, explainable AI suggestions that adapt to traveler behavior in real time.

Enhanced feedback mechanisms, allowing users to refine recommendations and train the assistant over time.

Longitudinal engagement studies to measure how sustained use affects satisfaction and loyalty throughout the travel experience.